Artificial intelligence is often heralded as the harbinger of the future, and it raises a captivating question: what powers AI’s ability to generate answers, produce content, and keep learning? At its core, that ability comes from the interplay of three things: algorithms, data, and computational architecture. In this exploration, we take a closer look at each of them and at how they work together.

The Cognitive Machinery of AI

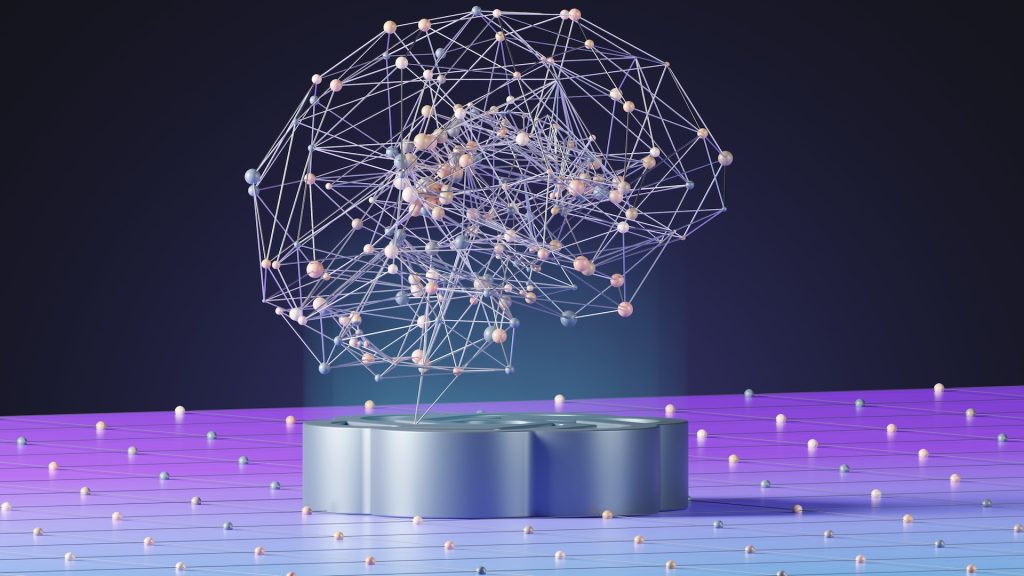

Many modern AI systems are built on artificial neural networks, which are loosely inspired by the way the human brain processes information. Instead of biological neurons, they use artificial neurons: simple mathematical units that each compute a weighted sum of their inputs and pass the result through a nonlinear function. Connected in large numbers, these units form networks that can recognize patterns, draw inferences, and make decisions based on the data they process.

The backbone of this cognitive machinery is the neural network architecture. Deep learning, a subfield of machine learning, stacks many layers of artificial neurons so that data is processed hierarchically: early layers pick up simple features, and later layers combine them into more intricate patterns and relationships. The power of these networks comes from the fact that their connection weights are learned from data rather than programmed by hand, which makes them effective problem-solvers in many domains.
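To make the idea of layered processing concrete, here is a minimal sketch of a forward pass through a two-layer network in plain Python. The weights are hand-picked for illustration (they are an assumption of this example, not learned values) so that the network computes XOR, a pattern that a single layer of this kind cannot represent on its own. The function names are likewise illustrative.

```python
import math

def dense(inputs, weights, biases):
    # One layer: each output neuron takes a weighted sum of the inputs plus a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    # Nonlinearity applied between layers.
    return [max(0.0, v) for v in values]

def sigmoid(v):
    # Squashes the final score into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-v))

def forward(x, layers):
    # Pass the input through every layer in order; ReLU between layers,
    # sigmoid on the final output.
    h = x
    for i, (weights, biases) in enumerate(layers):
        h = dense(h, weights, biases)
        if i < len(layers) - 1:
            h = relu(h)
    return [sigmoid(v) for v in h]

# Hand-picked (not learned) weights that make the network compute XOR.
LAYERS = [
    ([[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0]),  # hidden layer: two neurons
    ([[10.0, -20.0]], [-5.0]),                # output layer: one neuron
]

for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, round(forward([a, b], LAYERS)[0], 3))
```

The hidden layer turns the raw inputs into two intermediate features, and the output layer combines them. In a real system, training would find such weights automatically.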

The Eloquent Language of Algorithms

Algorithms are the rulebook of AI: the precise, step-by-step instructions that AI systems follow to perform their tasks. Think of them as the conductor’s baton, orchestrating the symphony of AI’s computational might. They draw on mathematics, statistics, and computer science, and they are what give AI systems their problem-solving and decision-making abilities.

Machine learning, a subset of AI, equips machines with the ability to learn from data and improve their performance over time. At its heart are optimization algorithms that adjust a model based on experience. Gradient descent and its variant stochastic gradient descent repeatedly nudge a model’s internal parameters in the direction that reduces its error, while backpropagation is the procedure that computes, for a neural network, how much each parameter contributed to that error.
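As an illustration of how parameters are adjusted to reduce error, the sketch below fits a straight line with plain batch gradient descent on mean squared error. The data, learning rate, and step count are assumptions chosen for this example; the points lie exactly on y = 2x + 1, so the loop should recover a slope near 2 and an intercept near 1.

```python
def fit_line(xs, ys, lr=0.05, steps=2000):
    # Model: y_hat = w * x + b. Start from zero and improve step by step.
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w = 0.0
        grad_b = 0.0
        for x, y in zip(xs, ys):
            err = (w * x + b) - y
            # Gradients of mean squared error with respect to w and b.
            grad_w += 2.0 * err * x / n
            grad_b += 2.0 * err / n
        # Move against the gradient to reduce the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Illustrative data generated from y = 2x + 1.
XS = [0.0, 1.0, 2.0, 3.0, 4.0]
YS = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_line(XS, YS)
print(round(w, 3), round(b, 3))
```

Stochastic gradient descent follows the same idea but estimates the gradient from one example or a small batch at a time, which is what makes training on very large datasets practical.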

Natural language processing (NLP) relies on model architectures such as recurrent neural networks (RNNs) and transformers, the family to which GPT-3 belongs, to process and generate human-like text. These models are trained on vast datasets of human language, from which they pick up statistical regularities of grammar, context, and meaning. That is what enables them to compose coherent and contextually relevant text.
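The principle of learning language patterns from text and then generating new text can be shown with something far simpler than an RNN or a transformer: a bigram model that counts which word tends to follow which. The sketch below is a deliberately tiny stand-in, not how GPT-3 works, and the corpus and function names are made up for this example.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    # For every word, count the words that immediately follow it.
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def generate(counts, start, length=5):
    # Greedy generation: always append the most frequent follower.
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # no known continuation for this word
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)

# Toy corpus, invented for illustration.
CORPUS = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."

model = train_bigrams(CORPUS)
print(generate(model, "the", 5))  # prints "the cat sat on the"
```

Modern language models replace the count table with a neural network that conditions on much longer context, but the training signal is similar in spirit: predict what comes next.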

Furthermore, reinforcement learning algorithms enable AI systems to learn from their own actions. The system, called an agent, makes a sequence of decisions, receives a reward or penalty for each one, and gradually favors the actions that lead to higher reward over time, much as a human learns from trial and error.
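A compact way to see trial-and-error learning is tabular Q-learning on a toy problem. In the sketch below, which is an illustrative setup rather than a production method, an agent walks along a five-cell corridor and is rewarded only on reaching the last cell. The corridor, reward, and hyperparameters are all assumptions of this example. The agent explores with random moves, and the learned value table ends up preferring "right" in every cell.

```python
import random

N_STATES = 5        # cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)  # action index 0 = move left, 1 = move right

def step(state, action_idx):
    # Move within the corridor; reward 1.0 only when the goal is reached.
    nxt = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done

def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap on episode length
            # Explore uniformly at random; Q-learning still learns the
            # values of the best policy because it is off-policy.
            action = rng.randrange(2)
            nxt, reward, done = step(state, action)
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q

def greedy_policy(q):
    # Best-known action for every non-goal cell.
    return [max(range(2), key=lambda a: q[s][a]) for s in range(N_STATES - 1)]

print(greedy_policy(q_learning()))
```

Each update pulls the estimated value of an action toward the reward received plus the discounted value of the best follow-up action, which is how consequences of later steps flow back to earlier decisions.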

The Quantum Leap in Computing Power

Today’s AI runs on classical hardware, but researchers are also investigating whether quantum computing could one day extend its computational capabilities. Quantum computers use quantum bits (qubits) as the fundamental unit of information. Unlike a classical bit, a qubit can exist in a superposition of 0 and 1, which allows computations that are fundamentally different from those of classical binary systems. AI researchers are exploring quantum algorithms and quantum neural networks for problems that are currently infeasible for classical computers, although this work remains largely experimental.
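To show what makes a qubit different from a classical bit, the sketch below simulates a single qubit on an ordinary computer. It represents the qubit as two amplitudes, applies the standard Hadamard gate to put it into an equal superposition, and reads off the measurement probabilities. This is a classical simulation for illustration only, and the helper names are assumptions of this example.

```python
import math

def apply_gate(gate, state):
    # Multiply a 2x2 gate matrix by a 2-element state vector.
    return [sum(gate[i][j] * state[j] for j in range(2)) for i in range(2)]

def probabilities(state):
    # Measurement probabilities are the squared magnitudes of the amplitudes.
    return [abs(a) ** 2 for a in state]

S = 1.0 / math.sqrt(2.0)
HADAMARD = [[S, S], [S, -S]]
ZERO = [1.0, 0.0]  # the qubit state |0>

superposed = apply_gate(HADAMARD, ZERO)
print([round(p, 3) for p in probabilities(superposed)])

# Applying the gate again returns the qubit to |0>, up to rounding error.
restored = apply_gate(HADAMARD, superposed)
print([round(p, 3) for p in probabilities(restored)])
```

Simulating n qubits this way needs 2^n amplitudes, which is one reason large quantum systems are hard to emulate classically.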

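The potential advantage of quantum computing lies in specific classes of problems for which quantum algorithms are known to be dramatically faster than the best known classical methods. Whether comparable speedups carry over to training AI models on large datasets is still an open research question, in part because loading vast amounts of classical data into a quantum computer is itself a bottleneck. If such speedups prove achievable in practice, they could allow AI models to explore a broader range of possibilities, boosting their accuracy and efficiency.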

The Voracious Appetite for Data

AI systems, in their quest to acquire knowledge and wisdom, feed voraciously on data. Data is the lifeblood of AI, nurturing its growth and intellectual capabilities. Without ample data, AI would be akin to a dormant intellect, devoid of the experiences necessary to make sense of the world.

The data ingested by AI systems comes in various forms, including text, images, videos, and sensor readings. It is drawn from the internet, public and private databases, proprietary collections, and sometimes from sensors deployed specifically to gather training data.

Machine learning and deep learning algorithms process this data to extract patterns, correlations, and insights. In general, the more diverse and extensive the dataset, the more robust and adaptable the AI system becomes, provided the data is accurate and representative of the task at hand. This process is not dissimilar to how humans learn from their experiences and interactions with the world.

AI’s Constant Evolution

AI’s evolution is never static; it is an ongoing, dynamic process. As AI systems are trained on more data, they grow more proficient in their respective tasks. When a model updates itself incrementally as each new example arrives, the approach is called “online learning”; many deployed systems are instead trained offline and improved through periodic retraining. Either way, this capacity to adapt makes AI a dynamic force in solving complex problems and generating content.
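The idea of learning continuously from a stream of examples can be sketched with a one-variable linear model that adjusts itself after every single observation. In the example below, the class name, the learning rate, and the two synthetic data streams are assumptions made for illustration. The underlying relationship changes partway through, and the model gradually tracks the new one.

```python
class OnlineLinearModel:
    # Predicts y from x and updates its parameters one example at a time.
    def __init__(self, lr=0.05):
        self.w = 0.0
        self.b = 0.0
        self.lr = lr

    def predict(self, x):
        return self.w * x + self.b

    def update(self, x, y):
        # One small gradient step on the squared error for this example only.
        err = self.predict(x) - y
        self.w -= self.lr * err * x
        self.b -= self.lr * err
        return err

def stream(slope, intercept, n):
    # Synthetic stream: x cycles through 0..4 and y follows a straight line.
    for i in range(n):
        x = float(i % 5)
        yield x, slope * x + intercept

model = OnlineLinearModel()

for x, y in stream(3.0, -2.0, 3000):   # first regime: y = 3x - 2
    model.update(x, y)
print(round(model.w, 2), round(model.b, 2))

for x, y in stream(-1.0, 4.0, 3000):   # the relationship drifts: y = -x + 4
    model.update(x, y)
print(round(model.w, 2), round(model.b, 2))
```

No stored dataset is revisited here: each example is used once and then discarded, which is the defining trait of online learning.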

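For instance, in the field of natural language processing, a trained model such as GPT-3 does not update itself while it answers queries; its knowledge reflects the data it was trained on. Developers incorporate newer data by fine-tuning existing models or by training and releasing successor models, which is how such systems stay current and become more accurate over time. This cycle of retraining and refinement is one of the qualities that sets AI apart from conventional, hand-coded software applications.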

In conclusion, AI’s remarkable capabilities are an intricate amalgamation of algorithms, neural network architectures, computing power, and a relentless appetite for data, with quantum computing as a possible future addition. Like a brain that hungers for knowledge, AI keeps evolving as it is trained and refined, uncovering answers, producing content, and contributing to a new era of human-technology collaboration. With ongoing advances in AI research, we can anticipate even greater feats in the years ahead.